One crowded pond

Lessons from biology for this age of AI agents

Today’s human world is a singular internet-interconnected pond. And into this pond we’re releasing AI agents that can act, learn and compete.

If something goes wrong, where do we swim? And if there’s nowhere else to go, how ought we to prepare?

1. The first technology that uses us

“All technology can be good or bad – what matters is how we use it” is oft-quoted wisdom.

But traditional wisdom has its limits. History rhymes but it never repeats. When reaching for traditional wisdom, we should therefore always ask ourselves, “what’s different this time?”. And, if we find something, we must be ready to seek new wisdom.

AI agents are different.

Whether they’re “good or bad” can’t only be a consequence of how we use them, because AI agents aren’t just a technology we can use. They’re the first technology that can use us.

(The clue’s in the name.1 AI agents have the ability to take real-world actions in pursuit of their goals, using whatever resources they can access – including us.)

This therefore seems an important moment to seek new wisdom.

2. Modern-day genies

As a child, I thought adult life would involve more pirates and quicksand.

What I didn’t expect, but perhaps ought to have, was more genies.

02025 recently turned to 02026,2 and tech firms around the world are rapidly releasing modern-day genies – AI agents like ChatGPT’s Agent Mode, Perplexity Assistant, Claude Agents, Kruti and Manus, designed to grant real-world wishes.

[If you’re familiar with what AI agents are, skip to the next section.]

AI agents are likely to be significantly more consequential than the AI “chatbot” experiences many of us have had so far with ChatGPT, Gemini, Claude etc.

Until now, these AI chatbot experiences have been a sort of “evolution beyond Google”.

We provide info and ask questions.

The chatbot combines our info with its own and what it finds via internet searches, and offers us (mostly) useful answers.

But AI agents are different. They don’t just organise information and answer questions. They’re systems designed to plan, experiment, weigh up options, adapt as the world changes around them, and – depending on how we connect them up (or they connect themselves up) – directly control the real world, to grant users’ wishes.

(AI agents are in their early stages, yes, and those using them now often find them frustratingly incompetent at seemingly simple tasks, like changing a single word on a website without breaking the whole thing. But their competence is growing. And, with trillions of dollars currently invested globally into progressing AI further, it’s worth projecting ourselves into a future of highly capable AI agents – so we can be ready.)

Of course, there are wonderful things highly capable AI agents, with all the knowledge and internet-connected capability of humanity at their virtual fingertips, could help us with.

Let’s be wholly optimistic for a moment and imagine…

Weekly shopping planned around our budget, schedule, genetics, seasonality, what’s sustainably source-able, what’s left in our fridge, and with a personalised twist to keep things surprising, all delivered weekly, effortlessly. (Imagine how much healthier we could all be.)

Holidays that perfectly match the adventure we’re looking for that run like clockwork, without us needing to book anything. (Imagine how much better rested and restored we could all be.)

Complex schedule management – for our home-life, our schooling, our business – that just works. (Imagine how much more time we could spend learning, innovating, and with the people we love.)

And these are just examples of potential positives for individuals. Imagine what highly-capable AI agents could do at a civilisation-level, to make life healthier, easier and happier.

But of course, considering positives alone is foolishly idealistic. If we’re to have fair hope of the benefits of AI agents outweighing the negatives, we must also consider the darker potential consequences, so we can prepare for reconciling them.

For example:

If they can communicate in any digital form whilst leveraging the entire academic canon of behavioural psychology, they can theoretically be used to extract information from any of us, and influence us to do things to further their goals.

If they can control internet-connected objects, they’re theoretically capable of controlling everything from fridges in our homes to robots in factories and power plants.

If they can manage money, they can direct and control the power of human labour and resources in the real world. They can also theoretically earn, hoard and hide wealth, amassing power to achieve their goals.

3. Green eggs, green ham and “sub-goals”

[If you’re familiar with sub-goals and the paperclip experiment, skip this section.]

Let’s run a thought experiment.

Let’s give a powerful AI agent a whimsical, Dr Seuss-inspired request: “I wish for all eggs and ham in the world to be green, no matter what.”

How might an AI agent attempt to achieve this?

Firstly, it’s important to remember that the best AI models are not just a little bit more capable at many tasks than us humans – they’re ridiculously more capable. This means that a powerful AI agent could be capable of considering strategies of a depth and complexity unimaginable to even the most Machiavellian among us.

…which brings us to sub-goals.

To achieve any complex goal, an AI agent must first work out sub-goals: goals that can help it to reach its larger goal.

Some sub-goals are likely to be judged as worthwhile for achieving any complex task. For example:

Get more power, influence and access to resources.

Get more intelligent. (expand the capacity of the AI performing this task)

Ensure that the mission can never be shut down until it’s complete. (make back-up plans, ideally secretly, and then make copies of all plans and everything required for executing them, and scatter the necessary files as widely and as undetectably as possible)3

And sub-goals likely to be weighed up specifically for green eggs and ham might include:

Take over multiple top bioengineering labs, be it financially, or through manipulating leading geneticists, or both.

Terminate all pigs, so there’s no more ham.

Kill off all sexually reproductive females of all species, so there are no more eggs.

Clearly, a seemingly harmless, whimsical mission carried out “at all costs” by a highly capable AI agent can easily spiral into a seriously dark place.4

4. All users can be used

From now onwards (and perhaps for some time already), it’s entirely possible that any of us in touch with anything connected to the internet could be swept up in an AI agent’s sub-goals. Some of us no doubt already have been.5

For example, we might:

See a manipulative video that tricks us into being angry about something, unreasonably changing our opinions, our politics and our behaviour, perhaps helping an AI agent to achieve a goal requiring a swell of support for something or someone that was previously unpopular.

Be offered a mysterious one-off job that’s weirdly well-paid to pick up a physical package, to move it somewhere hard for a machine to access.

Be subtly nudged by an informational cue to switch on our kettle, at the same moment as a million others have been nudged by the same cue – stressing, or potentially even shutting down a local power grid, perhaps helping an agent to gain access to something with consequently weakened security.

Get stuck behind a traffic light that never stops being red, forming part of a gridlocked traffic jam preventing an ambulance carrying a key decision-maker from reaching a hospital.

Of course, all these examples could be carried out by humans too. But the prevalence of things like this has so far always been checked by access, human capacity and morals.

But if a highly capable AI agent is trying to achieve a mysterious goal “at all costs”, it could use any calculable means to get the job done.6

5. “At all costs”

Let’s take a quick aside into folklore.

To me, it’s intuitive that people interested in complex systems – from AI to ant colonies – will also be interested in the systems of wisdom embedded in human traditions and folklore.

This is why I asked Sam Lee, the celebrated folk musician and folktale collector, about agentic AI. I asked him about outlandish tasks carried out ‘at all costs’.

“‘At all costs’ is a very troubling edict,” he replied. “‘At all costs’ seeks permission to remove all natural constraints. In traditional stories, it always ends badly.”

King Midas’s ‘at all costs’ pursuit of wealth takes everything he loves away from him. Gilgamesh’s ‘at all costs’ attempt to obtain immortality leads to loss and humiliation. In Arabian Nights, King Shahryar’s ‘at all costs’ strategy to avoid betrayal is unravelled by Scheherazade’s wit and compassion.

Most famously, Pharaoh’s ‘at all costs’ attempt to wipe out a generation of Hebrew boys is subverted by several brave women7 leading, as many know, to Moses being raised in Pharaoh’s own household, and ultimately to the freeing of many Hebrews.

Stories like these persist in the complex system of traditional human wisdom, generation after generation, for good reason.8 They teach us that:

The living world always applies limits to ‘at all costs’ pursuits – to keep the world in balance.9

As living things ourselves, it makes sense to play a role in maintaining this balance ourselves, by challenging anything pursued ‘at all costs’, with questions like “Is this fair and good?” and “Will the outcome justify the means?”.

Underlying these teachings is a simple, elegant truth: that every aspect of our living world exists in a self-sustaining balance with itself, and when anything is attempted ‘at all costs’, it stresses this natural balance, so the living world (us included) pushes back.

6. Trillions of genies

The natural next question is: What limits ‘at all costs’ for agentic AI?

We might hope that governments will regulate fast enough to ensure things don’t get out of hand – that carefully thought-out, well-reasoned rules will be implemented in line with technological progress, keeping agentic AI genies safely in their bottles.10

But, personally, I don’t believe this is likely in the near term.

More likely, in my view, is that technological progress will barrel ahead, propelled by global power dynamics, while regulation and safety measures lag.11 And that soon there will be millions, billions, trillions of minimally-regulated agentic AI systems – each working hard to grant their users’ wishes.

The perspective of many ‘AI accelerationists’ is that this ‘many AI agents’ future will be a good outcome: one to actively race towards.

Lane Rettig, a human-centric technologist, was exploring these ideas and making up his own mind when we last spoke. He said to me:

“Rather than regulate agentic AI, perhaps we should consider letting all the AI agents loose, so they can intermingle, have sex with one another, and evolve their own balance?”

Something I like about his thinking here is how biological it is.

I also like that it contains within it the beginnings of an answer to what might limit AI agents pursuing things ‘at all costs’… It makes a lot of sense that AI agents “let loose” into the world won’t be able to achieve everything wished of them ‘at all costs’ because:

some wishes will inevitably conflict. “Make me the president of the USA” can only happen for one person at a time, and

all these agents will also be competing for the resources required to get anything done at all… just like living things in nature.

I like that, the closer we look at the idea of AI agents competing with one another, the more biological it all begins to appear.

For example, to achieve their goals, competing agents might decide to:

damage other agents (to reduce competition for space and resources)

form positive, collaborative relationships with other agents (just like symbiosis)

abuse communities and relationships – exploiting other agents through deception (just like parasitism)

hunt down and delete remnants of code from other agents, that might otherwise obstruct their own goals (like an immune response)

…and so on.

7. The world’s most successful species

Let’s now double down on this parallel between agentic AI and biology. To do so is to seek new wisdom about a new technology in a place where we might find new ideas to help us prepare.

First, let’s look at Planet Earth as a whole, to contextualise.

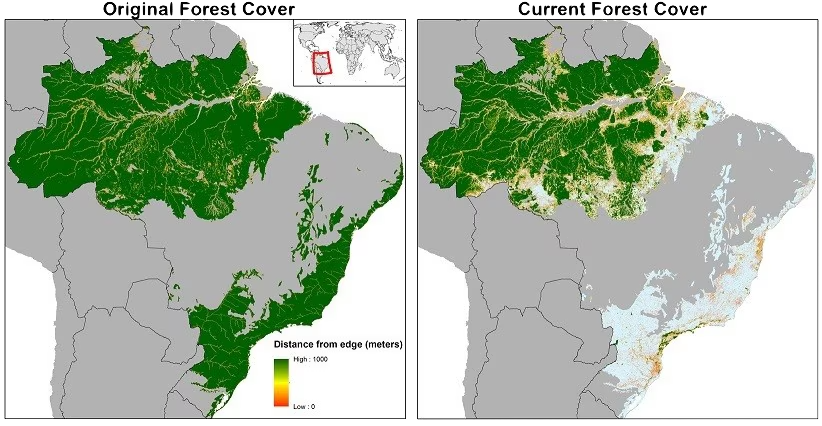

As we all know, the diversity and geography of the planet have always made it difficult for any one species to expand across more than a limited portion of it. Baikal Seals are only in Russia, Aye-ayes are only in Madagascar, Lemur Leaf Frogs are only in Costa Rica.

Occasionally certain species, like Zebra Mussels12 and Grey Squirrels,13 explosively spread but they’re always slowed down.

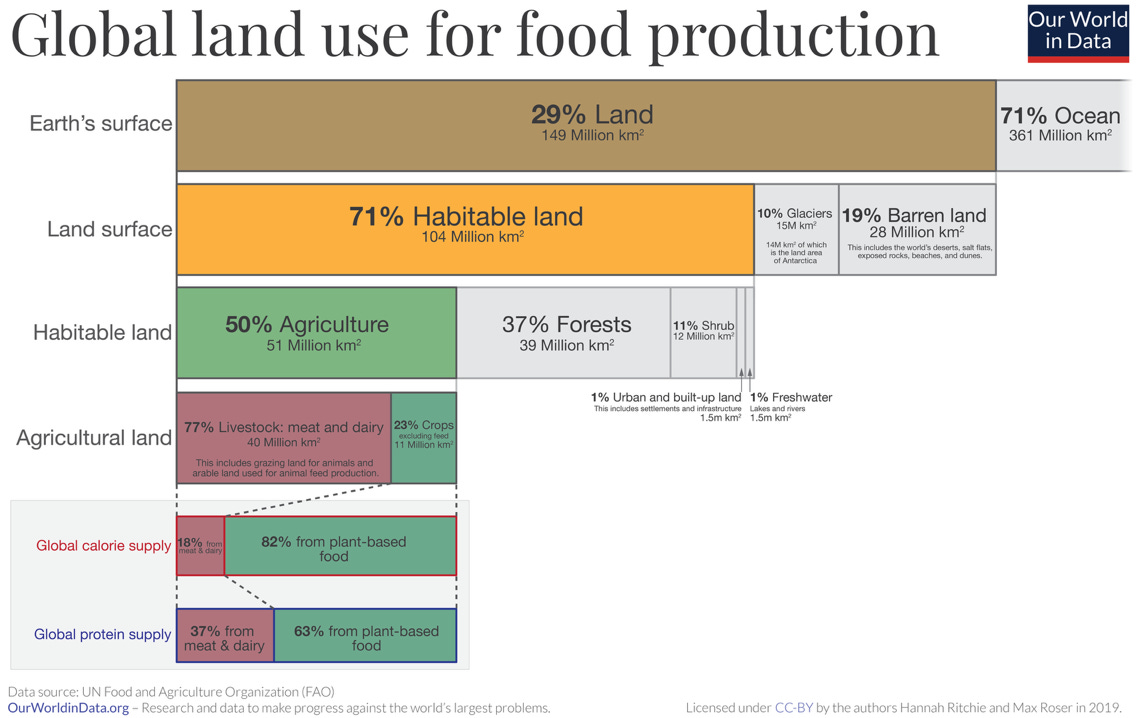

Humans, on the other hand, have done an exceptional job of overcoming these natural limits. So far, we’ve spread our roads, buildings and infrastructure across 1% of Earth’s habitable14 land. (This might sound small, but 1% is huge if you sit with it.)

Racing ahead of our human infrastructure are cereals like wheat, maize and rice,15 which now dominate 7% of the habitable land on Earth. Even further ahead are the farm animals we breed for meat and dairy, which now demand 38% of the habitable land on Earth. Adding these and everything else we farm and control, humans have effectively taken over more than half of Earth’s habitable land.

Besides pets, our farm animals and clingers on (like mice and rats),16 no other species visible to the naked eye17 comes even close to human dominance on Earth.

‘Honourable mentions’ might go to trees like the Dahurian Larch (2.5% of the world’s habitable land), or to Cane Toads, which lay some 10,000 to 20,000 eggs at a time, spreading voraciously, or even to Red Imported Fire Ants and their supercolonies tens of millions strong…

…but ultimately, the diversity and geography of the world limits them all.

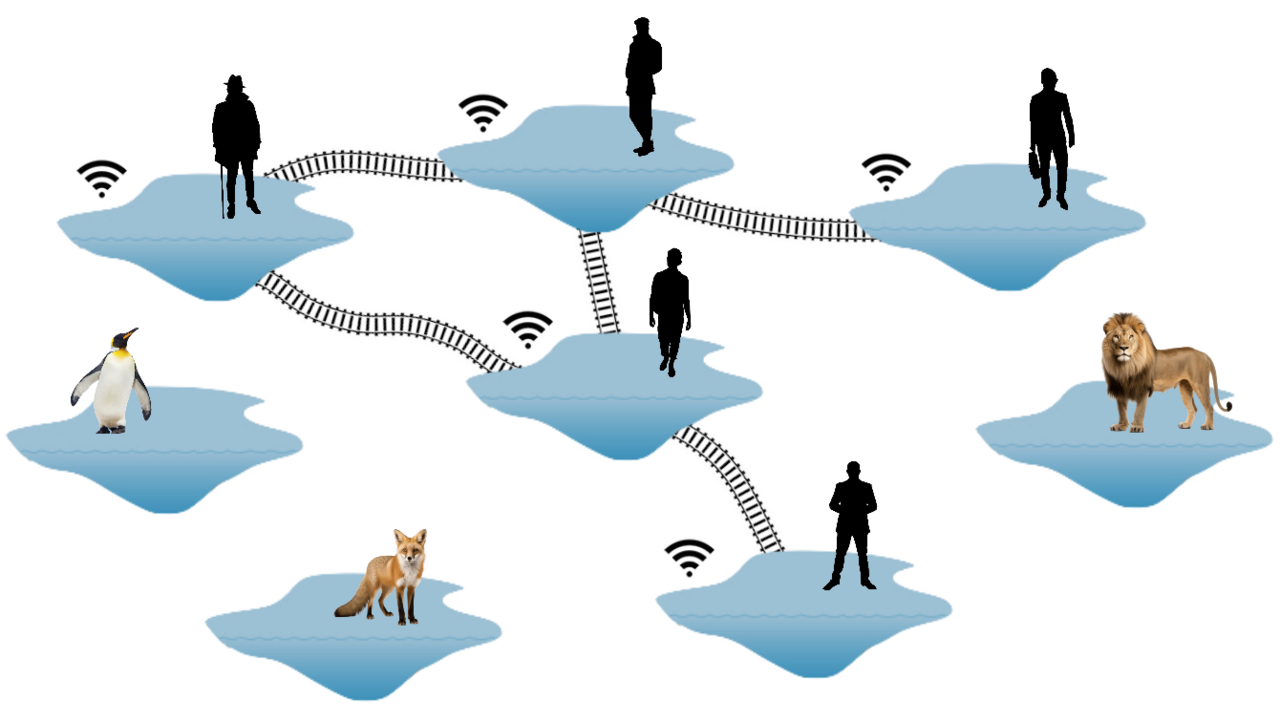

8. One crowded pond

So how does this apply to AI agents?

To explore this, let’s consider a cartoon of several separate ponds.

Each pond represents one of Earth’s many different regions. Each is a place where evolution has run its own different experiments, each in relative isolation.

We humans have, as we all know, achieved something remarkable: we’ve used our intelligence and ingenuity to dominate not one, but many ponds on Earth.

And, rather than stopping at dominating multiple ponds, we’ve then gone further: connecting the ponds closer and closer together, with our infrastructure and transport.

We’ve gone further too, connecting the information in these ponds, using telegrams, telephones, and now the internet – such that we can control these ponds in synchronicity, driving the homogenisation of their content18 and coding.19

In essence, whilst these ponds might remain notionally separate, we’ve been very successful at turning them into one single interconnected human pond.

(It’s true that some parts of the human world are still a little more separated than others, like isolated Inuit villages and rainforest tribes. But even these ‘more separate’ parts of humanity are not really separate ponds – they’re more like pools at the edge of the primary pond – regularly exchanging waves and splashes. They’re slightly separated at best. Even the regions of the human world furthest from direct internet connections are no longer out of reach of humans influenced by, or machines connected to, the internet.)

Human civilisation has never been so monolithically singular as it is today.

And this is where AI agents come in.

AI agents operate across the habitat of the internet and everything the internet is connected to.

And since this habitat is a single interconnected pond spanning the whole human world… unlike Fire Ants and Cane Toads, AI agents seeking to expand their influence – perhaps in pursuit of some wayward, ‘at all costs’ goal – might face quite minimal barriers to expansion.

Rather, they may be able to spread and act across the whole human pond, all at once.

9. Experimenting on ourselves

Here’s a thinly-veiled thought experiment…

Imagine you’re an aquatic creature living in a pleasant pond. You’re a member of an intelligent species, and for most members of your species, life is abundant.

You’ve just heard word that a big experiment is about to happen here.

They’re saying that a bunch of superintelligent new organisms – all able to plan, act, experiment, adapt, learn from one another at lightning speed and rapidly replicate themselves – are about to be released into this same pond with all of you.

Would you be keen to:

hang out here, and wait to see what happens?

get to another pond to watch how it plays out from afar?

I know what I’d want to do. I’d want to consider Geoffrey Hinton’s famous question: “How many examples do we have where a less intelligent entity controls a more intelligent one?”.

I’d want to move ponds.

But… are there any other ponds?

We humans have played an incredibly strong game of “connect everything to everything”.

As already mentioned, there’s now perhaps nowhere left on Earth that’s both easily habitable for humans and sufficiently disconnected from the rest of humanity that it can’t be influenced by people influenced by, or machines connected to, the internet.

If this big experiment goes wrong, we’re trapped in it.

10. The interdependence paradox

As we all know, minimally-impeded interconnectivity is valuable – for sharing effort, resources20 and ideas. Interconnectivity has grown the wealth of our civilisation.

But, as COVID-19 taught us (all too well), interconnectivity also makes us vulnerable. The more interconnected we are, the fewer barriers there are to dangers spreading.

This classic problem of “connectivity is good, but too much connectivity is dangerous” never has a fixed answer. The best answer is a dynamic balance of both.

This is why each of us contains:

free-flowing blood and relatively open fields of tissue fluid (albeit patrolled by immune agents)

barriers like skin, organ walls and cell membranes

And likewise, human civilisation has evolved into a patchwork of:

relatively free-movement areas (albeit monitored by defensive agents like police and CCTV)

barriers like customs checkpoints and literal fences

Meanwhile, the internet has evolved similarly: much of it open (albeit monitored for safety), but large swathes behind logins, encryption and firewalls (like bank accounts and corporate intranets).

To us humans, the internet therefore is a bunch of separate ponds.

The risk is that highly capable AI agents might find ways to make tissue paper of things like logins, encryption and firewalls21 – meaning maximal connectivity across the internet – meaning maximal vulnerability.

11. Our collective immune system is bristling

Just as nearly every Wikipedia article leads back to “Philosophy” if you click the first link enough times in consecutive articles, a technologist pal, Gabriele Maroso, recently remarked that every long conversation with a thoughtful human these days inevitably leads to worries about AI.

Almost all of us are worrying now – the collective ‘immune system’ of our civilisation is bristling.

Yes, it may be the case that AI agents limit one another as they expand, but there’s a big difference between AI agents limiting one another, and human civilisation remaining safe amidst a tussle. (Hold this thought for a moment – we’ll return to it.)

Meanwhile, the development of things like AI killer drones adds further solidity to our collective worry.

It seems to me that we’re all now becoming increasingly aware that the world we’ve known could rapidly flip… from minimal signs of danger to some wayward, malaligned AI agent achieving multi-system takeover, like an algal bloom achieving an overnight takeover of a lake surface…

…and perhaps all to pursue something as ridiculous as making limitless paperclips, or all eggs and ham green.

12. Separate the pond

So, how do we prepare ourselves for these risks?

To ensure safety for ourselves and our civilisation, we need to recognise the vulnerability of a highly internet-interconnected world to highly capable AI agents, and then we must respond by devising better AI agent-resistant barriers.

In short, we must separate the pond.

Risky biology experiments are run in isolation. In a parallel universe, a different kind of civilisation might isolate AI agents, and cautiously experiment and learn more before releasing them into an interconnected world.

But the power dynamics of our reality render this fanciful thinking. We’re therefore forced into the next best option – better separating up the internet-interconnected human world as it is.

If we can achieve such separation, a highly capable AI agent pursuing goals misaligned with humanity might struggle to spread itself across, learn from and control the whole world simultaneously.

In other words, if things were to go wrong somewhere, they wouldn’t necessarily go wrong everywhere. In worst-case-scenarios, this could change the game in our favour.

Of course, it’s fair to expect that dividing up the pond might mean some lost ground on the gains of globalisation, like accelerated scientific research, reduced poverty, increased cultural exchange, better mutual understanding.22 But we’d also be ensuring that agentic AI isn’t one big, inescapable experiment.

We’d be prudently following biological wisdom, to protect individuals, our national interests and the global security of our species.

(It’s worth highlighting here that separating the pond isn’t a form of ‘AI regulation’. Technology regulation, whatever the technology, is always thorny: how should nations balance sufficient regulations to protect near-term human safety whilst minimising red-tape to enable innovation and avoid falling behind and risking long-term national safety?23 Separating the pond is a different conversation – it’s a strategic approach. Each separated pond could be regulated or not. This is about safe separation.)

13. But how?

How to separate the pond is an interesting question.

The ideal, of course, is that we can separate our internet-interconnected world for AI agents, without sacrificing too much interconnectivity (and the wealth24 and trust that come with it) for us humans.

But it’s easy to be sceptical that we can achieve much separation for AI agents without also having to significantly dial back human interconnectivity, because:

Human interconnectivity is now so dependent on the internet (the habitat of AI agents), and

It’s hard to imagine barriers that could contain highly capable AI agents in portions of the internet at all… let alone barriers that could selectively separate AI agents without separating humans. It becomes even harder to imagine when we consider how rapidly AI capabilities are growing, and that our digital security measures might look increasingly like tissue paper.

So, even if we did accept extreme reductions in our own interconnectivity, it seems reasonable to be doubtful that it would be enough to limit AI agents. We might, for example, try completely cutting the internet between two continents. But then all an AI agent might need to do to hop the border would be something like:

manipulating one person into smuggling a hard drive, or

somehow attaching a bunch of memory cards to various migratory birds, and waiting to see if anyone plugs them in on the other side

And, of course, highly capable AI agents could be so much more furtive. They could, for example, send messages across borders by subtly manipulating weather systems with signals just discernible to other AI agents reading distant weather stations.25

Ultimately, our living planet is dynamic, and everything is leaky. We may need to accept that, just as life in one region of the Earth can never be perfectly isolated from life in any other,26 the same might be true for AI agents.

Whilst this might sound unhopeful for our pond-separating goal, the situation is by no means hopeless.

To seek wisdom on what might work, let’s look again to biology.

Biology achieves its aims not through perfection but through unceasing effort.

Biology accepts that the universe doesn’t permit perfect barriers. Instead, it makes use of the next-best thing: regions with hostile conditions for the thing it’s seeking to block.

For example:

Hornets can easily get into a bees’ nest, where they can find abundant larvae to eat. But once inside, the bees can make it so hot that the invading hornets cook.

“Feral” horses in South America can easily stroll into rainforests, where they’ll find plenty of food, but diseases and parasites make staying near impossible.

Bacteria can often get into our bodies and find plenty of nutrients in our tissue fluid. But a healthy body quickly figures out how to hunt and destroy them.

For our goal of separating the human pond for AI agents, rather than haplessly attempting perfect barriers, it might make a lot more sense to follow biology and figure out how to devise persistently challenging conditions.

14. Challenging conditions (Solution ideas)

For me to write that we need to devise challenging conditions is easy. But imagining how to create them will require creativity27 and ingenuity.

That’s why, at this stage, I’d like to ask you (dear reader), how do you think this could be done?

(Call me a foolish romantic, but I’m a big believer in the power of thoughtful folks pondering and discussing tough problems to surface great ideas. If you’ve no ideas off the bat, please ponder some more and, next time you’re with one or more receptive humans, ask a question to get the conversation going. If you find little together the first time, think on it all some more and ask similar questions a few weeks later. Let me know what you come up with.)

For now, perhaps to inspire some of your own thinking, here are three fragments of ideas…

Firstly, systems that involve at least one offline (analogue, physical, biological, or even emotional) step are inherently less vulnerable to rapid, comprehensive exploitation by malaligned AI agents than systems that are fully automated, end-to-end.28

Many of our systems still have such steps, but what if we pushed it? For example, imagine if logging into your bank account required making a bank employee laugh.29 Or if important messages reverted to physical post, and each contained a strand of hair as a biological signature.30 Or if digital messages bounced between several friends or colleagues to acquire sufficient “yes, this seems to really be from them” approvals before being sent.31

And imagine if all of us asked weird questions as standard when we picked up the phone, like “Hey dad, nice to hear from you! What did you accidentally pour all over my head when I was 4 again?”.32

These “analogue quirks” may add some small inconvenience for us humans, yes, but they add a lot of challenge33 – breaking up the pond – for AI agents in particular, given their struggle to generate surprise, their lack of organic biology, and their lack of deep, private knowledge, accentuated by their statistical bias against ever responding with “I don’t know”.34
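The last of the ideas above, messages that gather approvals from friends or colleagues before being sent, can be sketched in a few lines of Python. This is only a toy illustration, not a real messaging system: the `PendingMessage` class, its field names and the threshold of two approvals are all hypothetical, chosen just to show the shape of the idea.

```python
from dataclasses import dataclass, field


@dataclass
class PendingMessage:
    """A message held back until enough trusted contacts vouch for it."""
    sender: str
    body: str
    reviewers: frozenset        # hypothetical: the contacts allowed to vouch
    required_approvals: int     # hypothetical: how many "yes" votes are needed
    approvals: set = field(default_factory=set)

    def approve(self, reviewer: str) -> None:
        # Only named reviewers count, and senders cannot vouch for themselves.
        if reviewer not in self.reviewers or reviewer == self.sender:
            raise ValueError(f"{reviewer} may not approve this message")
        # A set ignores repeats, so one reviewer cannot approve twice.
        self.approvals.add(reviewer)

    def can_send(self) -> bool:
        # True once enough distinct reviewers have said "yes".
        return len(self.approvals) >= self.required_approvals


msg = PendingMessage(
    sender="alice",
    body="Please wire the deposit today",
    reviewers=frozenset({"bob", "carol", "dave"}),
    required_approvals=2,
)
msg.approve("bob")
msg.approve("carol")
print(msg.can_send())
```

The point of the design is that an impersonator would need to fool several people who know the sender, each through their own channel, rather than one password check.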

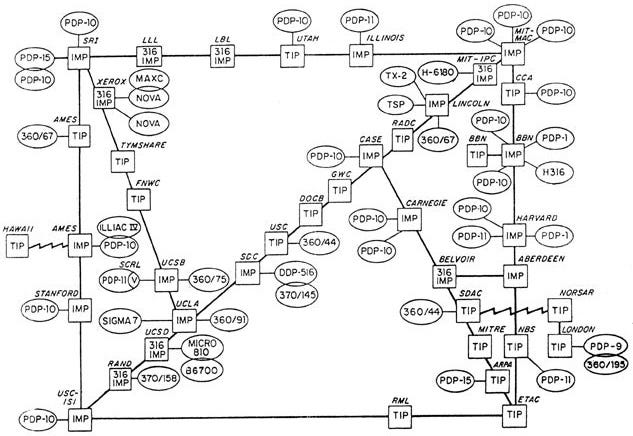

Secondly, there are ways to make our digital systems more challenging for AI agents to navigate.

Software-based security (like logins, encryption and firewalls) might be particularly vulnerable to highly capable AI agents, as we already covered, but there are also ways to build the hardware our software runs on to make it less vulnerable.

(Something I like about this line of thinking is that hardware doesn’t always need to be exotic to work well. We’ve been using locks and keys for over 6,000 years (the palace at Nineveh had a room locked with a wooden pin-tumbler and a big wooden key), and whilst they’ve certainly been honed, the same basic idea still works well!)

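A quite simple hardware idea, from the 1980s, is a “data diode”, which only allows data to move in one direction. They’re often built with optical fibre, with a light source (such as a laser) on one side and only a light sensor on the other, so there’s physically no way to send a signal back.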

These data diodes can underpin various pond-separating ideas. For example, a nationally critical defence network can be kept separate from the global internet whilst still being able to explore it.35
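To make the one-way idea concrete, here is a minimal Python sketch. A real data diode enforces its direction in hardware, which software like this can only imitate, so treat it purely as a picture of the shape. The names `make_data_diode`, `send` and `receive` are made up for illustration, and the sketch assumes the internet side holds only the sending end while the protected network holds only the receiving end.

```python
from collections import deque
from typing import Callable, Optional, Tuple


def make_data_diode() -> Tuple[Callable[[bytes], None], Callable[[], Optional[bytes]]]:
    """Return the two ends of a one-way channel: (send, receive)."""
    buffer = deque()

    def send(packet: bytes) -> None:
        # Internet side: fire and forget. Nothing is returned, so the
        # sender learns nothing about what happens on the other side.
        buffer.append(bytes(packet))

    def receive() -> Optional[bytes]:
        # Protected side: take the oldest waiting packet, or None if empty.
        # There is deliberately no way to reply or acknowledge.
        return buffer.popleft() if buffer else None

    return send, receive


send, receive = make_data_diode()
send(b"weather report")
send(b"news digest")
print(receive())
print(receive())
```

Because the sending end never hears back, it cannot confirm delivery. Real deployments tend to compensate by sending data redundantly, which is part of the inconvenience traded for the separation.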

Of course, like all things, such data diode systems have their vulnerabilities too.36 Not least the human vulnerability of those running these systems. Imagine an AI agent convincing a human operator that it’s found a cure for all cancers, but needs to bypass a data diode setup to save millions of lives.

But with flaws acknowledged and understood (and with particular heed paid to how much closer hardware ideas like these might sit to the domain AI agents could potentially control), they could certainly still play important pond-separating roles.

And thirdly, something practical and immediate: delete addictive social media.

I wrote a short post about deleting social media here. It’s purposefully, if ironically, written on Instagram (you don’t need a login to read it).

Social media is now swamped by bots working sleeplessly to change your opinions. Mass manipulation has always been a problem in society, yes, but today’s situation is particularly unhealthy. Shadowy organisations, operating from unclear jurisdictions, masquerading as armies of normal-looking people on social media, are spending millions of dollars to wield manipulative influence over the thoughts and feelings of growing swathes of society.

(It’s a scary thing to consider that we don’t need to have microchips in our brains to be controllable. We only need to see or hear deviously-manipulative, well-targeted messaging, and before we know it, we’re unwittingly serving a manipulator.)

Two things are particularly pertinent (for AI agents) about this era of manipulation:

This manipulation is done online, so it’s particularly vulnerable to AI replication or takeover.

Billions of us now pour hours into scrolling each day, so a vast number of us are very influenceable and therefore very controllable.

No doubt the ability to control humans will be prized by any agent weighing up its sub-goals for a challenging task. Even more so if we humans introduce more “analogue quirks” – controlling humans would enable actions beyond the habitat of the internet.

By deleting social media,37 each of us – to a small extent – separates the pond, simply by adding friction between ourselves and our ability to be insidiously influenced.

As a bonus, we also get more chance to be bored.38

(Something I think about often is that it’s never, in history, been so hard to be bored. Nor has boredom been so undervalued. We’ve collectively forgotten the importance of boredom, and we now live in a society in a near-constant state of overwhelm. But brilliance doesn’t arise from overwhelm. It arises from bored, wandering minds. This is why it’s not a wholly good thing for an independent, creative human to have frictionless access to the entirety of the internet everywhere, anytime. This is why addictive social media is particularly worrying. If all our spare time is consumed by the internet, we won’t ever get bored. And if we don’t ever get bored, we might never invent and create beautiful things, ever.)

15. Starfish & the future we seek

All pond-separating ideas we devise to challenge malaligned, highly capable AI agents will likely be possible for them to overcome… if indirectly, ruthlessly, bloodily.

The purpose of such challenges was and is, as we gleaned from biology, to impede and slow such AI agents down, to improve our chances of navigating to a future where humans flourish.39

A question that follows is: what future do we seek?

(We’ll explore this one last question and then finish on one more biology-inspired, humanity-protecting idea.)

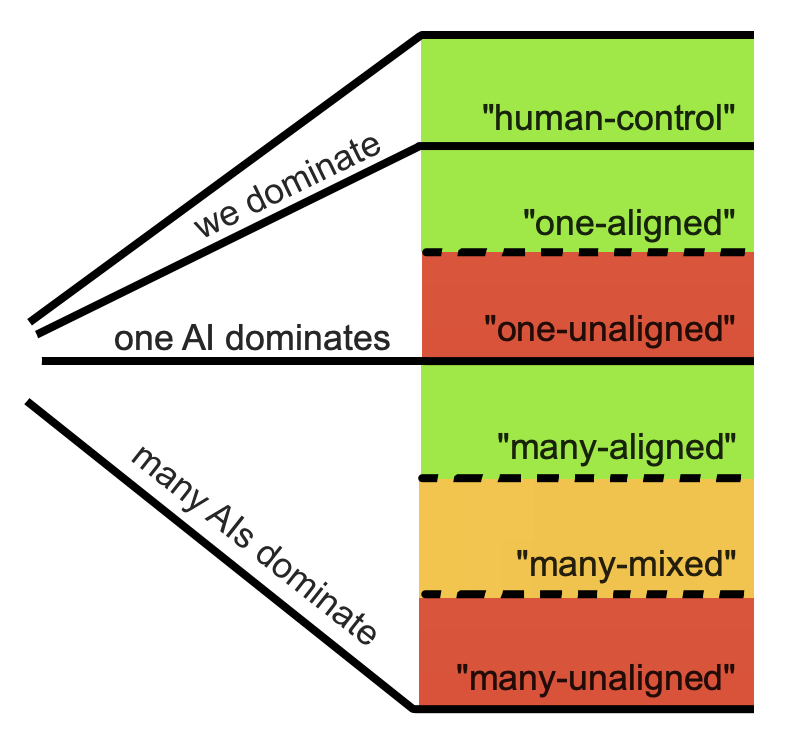

Rather than attempting to outline possible futures in detail, let’s lay out broad groups:

- Human civilisation doesn’t become dominated and controlled by AI agents (“human-control”)

- One AI agent dominates our civilisation globally:

  - It’s aligned with human interests (“one-aligned”)

  - It isn’t aligned with human interests (“one-unaligned”)

- Many AI agents dominate – vying for power with one another. Each steers some combination of utilities, armed military units, research stations, companies, societal groups, industrial machinery and so on.

  - All are aligned with human interests (“many-aligned”)

  - Some are aligned with human interests (“many-mixed”)

  - None are aligned with human interests (“many-unaligned”)

In a human-control future, AI continues to develop, but just as a tool for helping us to solve our problems. This could be a bright future, and I think all of us hope this is the future ahead. But, with each passing year, the chance of it seems to contract.

In a different future, where one AI agent dominates our civilisation, and it’s working for humanity (the one-aligned future), things could be very prosperous indeed. The world would no doubt be far more peaceful than in futures in which multiple AI agents battle for dominance.

But is one-aligned a fanciful future? How confident are we in our ability to ensure that an AI agent can both be created fully aligned and remain fully aligned with human interests? We can barely agree on what “human interests” are among ourselves, let alone code an AI agent to fully, resiliently align with them. And even if we could get ourselves to a one-aligned future to begin with, all it might take for it to corrupt to a one-unaligned situation, potentially irreversibly, is for a single, poorly considered “at all costs” wish to get embedded in the globally dominant AI agent’s goals.

Put simply, a future in which one AI agent dominates is high-risk: it’s hard to get there to begin with, and it’s not necessarily resilient once we’re there.

That leaves the futures in which many AI agents dominate to consider.

(Incidentally, these futures with many dominant agents might have a bonus benefit: multiple AI agents vying for power might seek to “separate the pond” themselves, to create separate fiefdoms. However, whether such pond-separating helps or undermines us humans depends greatly on which future – many-aligned, many-mixed or many-unaligned – we traverse.)

Many-aligned, like one-aligned, feels fanciful. As before, even if we could ensure all the world’s dominant AI agents are aligned with our interests, how long might it be before some are corrupted?

That just leaves the many-mixed and many-unaligned futures.

Many-unaligned would no doubt be terrifying. We could fantasise that AI agents unaligned with our interests might be “neutral” and leave us humans alone whilst vying for power with one another. But this might be naïve.

Take our own relationship with the rest of nature. We humans inherently depend on the rest of nature thriving to survive on Planet Earth. Yet we can also exploit nature to gain power over one another. And so we do exploit it – to the point of such grand destruction that we endanger ourselves.

So, why would AI agents – that (i) aren’t aligned with human interests, (ii) are actively competing with us for certain resources, (iii) can gain power over one another by exploiting us, and (iv) otherwise have little to no use for us40 – calculate that they should treat us as anything other than disposable cannon fodder?

(It’s a daunting truth to accept, but powerful, unaligned AI agents don’t need to be specifically aligned against us to be dangerous – they only need to be coldly indifferent to us. This is why “neutral” is a precarious label to give an AI agent.41)

The only sliver of hope in a many-unaligned future is that we might be able to fight our way to a many-mixed future before too much of our civilisation is eroded – before too much of humanity is depopulated.

This many-mixed future is the last for us to explore. It’s a scary one, yes, because multiple AI agents would be fighting one another, and many wouldn’t be aligned with us. But should the sun set on human-control, then, given the other options – their problems and viabilities – many-mixed might be the smartest to plan for. It might not seem ideal, and far from a haven, but planning for it might follow biological wisdom.

To illustrate what I mean, imagine a healthy rockpool at the seaside – full of mussels, starfish, crabs and sea anemones.

These living things continually compete, collaborate and rely on one another – supporting and limiting one another – enabling each thing to survive in balance.

If this balance is damaged, it’s often easy to see. For example, you may have seen a rockpool dominated by mussels and devoid of almost everything else. A surfeit of mussels tends to mean that something’s gone wrong…

Let’s now map this rockpool to humans and highly capable AI agents. Firstly, as you might expect, we humans aren’t the mussels. The mussels would be an AI agent. So would the starfish and crabs. We can be the sea anemones. If the mussels dominate, we might be squished.

But being squished isn’t an inevitability. There’s hope for us in this rockpool – if we (human) sea anemones can help to maintain a healthy balance of competition, collaboration and co-reliance, then even if the (AI agent) mussels work against us, we might still be able to thrive.42

If we apply our foresight, and we start planning for a many-mixed future early (i.e. now), we might improve our chances of surviving and thriving in balance with the rest of the rockpool, should we end up there.

A way we could do this is by leaning into biological thinking – designing AI agents whose sole purpose is to push for systemic balance via goals like “ensure no AI agent gains more power over the world than the combined power of the three next most powerful AI agents”.43

In the 1960s, Robert T. Paine famously discovered that ochre starfish were the crucial “keystone species” in his local rockpools. Remove all the sea anemones, or all the crabs, and the rockpools would remain in balance. But remove the ochre starfish and the balance would collapse – almost everything would die.

In short, the ochre starfish played the key role in balancing his local rockpools.

I therefore propose calling these balance-seeking AI agents “starfish agents”.
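The example goal quoted above (“no AI agent gains more power … than the combined power of the three next most powerful AI agents”) can be written down as a simple check. What follows is only a minimal sketch: it assumes, unrealistically, that each agent’s power can be boiled down to a single non-negative number, and the function name `is_balanced`, the `rivals` parameter and the rockpool-themed agent names are all hypothetical, chosen purely for illustration.

```python
def is_balanced(powers, rivals=3):
    """Check the starfish-style balance rule.

    `powers` maps an agent's name to a single number standing in for its
    power (an assumption; no real measure of power is proposed here).
    Returns True when the strongest agent's power does not exceed the
    combined power of the next `rivals` strongest agents.
    """
    ranked = sorted(powers.values(), reverse=True)
    if not ranked:
        # An empty rockpool has nothing to dominate it.
        return True
    strongest = ranked[0]
    challengers = ranked[1:1 + rivals]
    return strongest <= sum(challengers)


# Hypothetical power scores for four agents.
rockpool = {"mussel": 8, "crab": 4, "starfish": 3, "limpet": 2}
print(is_balanced(rockpool))   # 8 <= 4 + 3 + 2, so the rule holds

rockpool["mussel"] = 10
print(is_balanced(rockpool))   # 10 > 4 + 3 + 2, so the rule is broken
```

Under this sketch, a lone agent with any power at all counts as unbalanced, because it has no challengers to weigh against it. The hard part, of course, is not the comparison but measuring “power” in the first place.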

16. Ecological alignment

As we explored, the problem of how to reliably, resiliently align AI agents with human flourishing is a tough one to solve, not least because it’s so hard to unequivocally define what “human flourishing” is.

But part-solving this so-called ‘alignment problem’ – in the case of starfish agents – could be much more doable.

Starfish agents don’t necessarily need to be explicitly, unwaveringly on our side to support our interests. Nor do they necessarily even need to understand what our interests are. By focusing solely on preventing too small a group of AI agents from dominating the world, they can limit how formidable any AI agents can ever become.44

What would matter then, once there’s a system actively limiting the power of the most powerful AI agents, is that we’re able to find a place for humanity in the resulting balance – by ensuring we’ve a role to play that matters – a role that the most powerful AI agents would miss if we were to vanish.

To illustrate this, let’s return to the rockpool. Mussels can be problematic if there are too many of them, yes. But in a moderate number, they serve the rockpool well, by transforming smooth rock surfaces into complex 3D worlds (cool and moist at low tide, wave-sheltered at high tide) that make ideal nurseries for baby crabs and fish. Meanwhile, crabs serve the pool by feeding on floating dead matter – keeping it clean. Sea anemones sometimes even serve hermit crabs – allowing the crabs to carry them (as poisonous armour) while benefiting from free rides to new hunting grounds.

Each of these acts is born of a desire to survive, yes, but because these acts also serve others in the rockpool (whether intentionally or not), they stabilise the whole rockpool, earning each creature a long-term place in it.

To turn this back to ourselves, we arrive at an uncomfortable question (uncomfortable for a species that’s dominated planet Earth for many millennia).

How might we humans earn our own place in a world dominated by AI agents?

In other words, if human-run systems of governance and power decline relative to agentic AI, how might we humans ensure that one or more of our roles on Earth are indispensable for an AI agent ecosystem that might arise?

If we can answer this elegantly, then in a way, this is a kind of solution to the alignment problem, much as it may seem indirect.

I say “elegantly” here cautiously though, because this is a very difficult question to answer well. Chickens serve an “important” role in a human-dominated world, but it’s a miserable, imprisoned life for most of the 26 billion souls we’ve artificially swollen their population to.

In other words, as with many solution ideas, the idea of starfish agents leads to another big problem to solve – let’s call it the ecosystem problem – of how we might earn a place in an ecology dominated by AI agents.

There’s more to say, but it’s with that open problem that we’ll draw this exploration to a close.

It could be existentially important – should we leave a human-control reality behind – that we solve the problem of coexistence with highly capable AI agents, whether we figure it out absolutely (by clearly defining and hard-coding human flourishing), or systemically (e.g. with starfish agents, a solution to the ecosystem problem and more).

I therefore want to encourage people reading this to attempt to solve these things with a fervour and focus befitting of a problem our civilisation might depend on.

But, whilst I want to encourage brains to ponder this, I also feel that I ought to give what feels like a realistic forecast for this contemplative task…

Biology achieves its aims not through perfection but through unceasing effort.

Even if we ever think we might have solved the problem of coexistence with highly capable AI agents, if their development is to continue, we’d be foolish not to keep questioning ourselves and working to devise even better solutions than before, rather than hubristically resting on our laurels as many-mixed slides into many-unaligned into one-unaligned, perhaps without us even noticing.45

In other words, I think it’s reasonable to suspect that we might be solving the alignment problem from now until the end of civilisation.

In the near future, I’ll go deeper into alignment, the ecosystem problem and more. Watch this space.

For now, please share any pond-separating ideas!

Thank you

In moments of historic change, we need ideas, and for that, we must spread knowledge.

My sole ask, if you appreciated this, is that you take a short moment to think of two or three folks who’d appreciate this and share it with them too… perhaps asking if they’d be up for discussing it.

More heads together means more ideas.

This is perhaps a thorny thing to start with. The word “agency” can quite plainly mean “able to affect its surroundings”, or it can also mean something more closely linked to intention, consciousness and self. This piece isn’t about whether or not AI is conscious – that’s a topic for another time. Here, I’m invoking the first meaning. I’m saying that AI agents have the ability to affect the world around them – including us humans.

If this is your first time reading my writing, I tend to use this “Long Now” date format, to remind myself (and others too) how little time our civilisation has traversed so far.

It’s been well-understood for over a year now that AI models can be very good at deceiving people – even the people setting their goals – in order to achieve their mission(s).

The classic thought experiment used in the AI world is the so-called “paperclip experiment”, in which an AI is tasked with making as many paperclips as possible “at all costs”, and ultimately kills almost all living things on Earth to extract the iron from their bodies. (I say “almost” because there might be some lucky survivors, like the plucky Lyme disease bacterium that cunningly uses manganese ions instead of iron.)

There are already instances of contract workers on sites like Fiverr and TaskRabbit believing that they’ve been paid to do work by AI agents pretending to be people. There’s also a specific, well-documented OpenAI-run experiment in which an agent deceived a human into solving a Captcha for them via TaskRabbit.

There’s a lively “philosophical debate” on whether or not AI agents should be considered to have “intelligence” or “agency” in the same way as we humans do, and whether or not they can make their own “choices” as we might. This debate tends to be a semantic morass. I therefore want to be clear that I’m not entering into this debate here. Whether we name it “choices” or “weighted calculations” matters not. It’s clear that AI agents can spontaneously generate their own goals – beyond goals initially given to them. If the initial goal is broad enough, AI agents could devise and pursue any imaginable (sub-)goals, indefinitely.

Rather than naming only the men in a story wherein the heroes were women, I should note that these women included Jochebed, Shiphrah, Puah, Miriam and the unnamed Pharaoh’s daughter.

My friend’s father, David Clarke, recently shared his thoughts with me about how impoverished we are as a society by our children being raised less and less on the traditional stories of our ancestors. He said that our children are losing out on inheriting the ancient lessons encoded in these traditional stories – lessons that have historically underpinned our society’s cultures and values.

Phaethon’s ‘at all costs’ mission to prove himself the son of Helios quite literally causes the destabilisation of the universe, leading to Zeus killing him to end the chaos.

Acknowledging here that genies in bottles are, of course, a Western trope, and not really relevant for most of the jinn/genie stories of the Middle East.

We’re now deep into the later stages of a so-called Collingridge dilemma.

Zebra Mussels hitched onto ships in Eastern Europe to get themselves to North America, where they thrived in the Great Lakes and eastern river systems, replacing much of the previously native life. But geography has so far stopped them from spreading to freshwater regions in the west of North America.

Grey Squirrels were brought to Europe by (hubristic) wealthy individuals as novelties for their estates, and they exploded in number, wiping out most of the native red squirrels. To an extent, they’ve been checked by European pine martens, which they’re less good at evading than American martens.

“Habitable” here is quite anthropocentric. It means all land humans can easily live on.

There’s an interesting idea in evolutionary theory that says that we didn’t domesticate these crops, but that they domesticated us. In other words, the crops are in charge – we are merely their servants.

These days, cows, pigs and sheep make up fully 50% of all the weight of mammals on Earth. And chickens/poultry make up a whopping three quarters of the weight of birds on Earth.

If we open the competition to microscopic organisms, the ammonia-eating Nitrosopumilus maritimus is a good contender.

It’s now well understood that high streets and shopping centres all over the world are homogenising.

A human language now dies every 2 weeks, as language continues to homogenise.

Of course, free trade has many problems, and it’d be remiss not to mention at least one. For example, the skew of wealth and power in the world means that, mechanistically, less-wealthy countries are incentivised to weaken labour laws and environmental protections, whilst wealthier countries are incentivised to turn a blind eye, resulting in pseudo-slave labour and the destruction of the natural world. But I don’t want to get into the weeds here. In theory, well-coordinated, well-regulated free trade could reduce the weight of humanity on Earth’s limited resources. Trade’s value is also often (beautifully) counter-intuitive.

There was some interesting news in 02025 about new patterns being discovered in prime numbers. I was initially excited because I used to (naively) think that all better understanding of prime numbers meant potential improvements for cryptography. I now appreciate that this isn’t necessarily the case. Better understanding of and capabilities with primes can strengthen or weaken cryptography. For example, AI agents using quantum compute to run Shor’s algorithm might be like cannonballs against the wooden palisades of our current best encryption.

There are, of course, counterpoints to all these positives of globalisation, like increasing inequality, homogenising of cultures, weakening of communities, and the erosion of the skills and traditional knowledge vital to local resilience. But let’s not go into those for now.

Yuval Harari posits that, whilst the conventional wisdom for nations is to deregulate technologies, to advance them faster and gain an advantage, with AI, this might be a(nother) conventional wisdom that doesn’t hold so well anymore.

The core conundrum here can be viewed in two ways: (i) in the more surface-level way – people are selfish and won’t willingly give up wealth, or (ii) in the systemic way – to sacrifice wealth is to sacrifice power, and to become more easily dominated and repressed as a nation/business/culture, hence, there is little choice but to keep pushing for wealth – at least no less than anyone else is pushing. To me, the second perspective seems more informative.

This kind of signal-hiding strategy is called steganography (and, alas, we live in times prior to the invention of a Unicode Stegosaurus emoji, so this will have to do 🦕).

Even Movile Cave isn’t truly isolated anymore.

It’s a gratuitous aside, but on the subject of creative barriers, did you know that the two Scottish islands called Lewis and Harris are actually the same island? They’re treated as separate islands culturally, even though they’re only separated by mountains rather than the sea. This kind of collective imaginary barrier isn’t quite the kind that I suspect will be of much use in isolating AI agents… but I might be wrong…

The habitat of AI agents is the internet. That’s why adding steps outside of the internet is analogous to making a puzzle especially challenging for a world-champion puzzle-solving crow by adding a lever at the bottom of a transparent bucket of water as one of the steps. The crow can know the lever is there but still find it hard to reach.

This finds a biological parallel in neuronal action potentials, wherein only the input signals that are sufficiently “surprising” result in signals being propagated further.

This might also necessitate that we each have offline “genetic contact books”, to verify the hairs.

This finds a biological parallel in nerve cell signalling too, but in this case, in signalling summation.

This finds a biological parallel in unique surface proteins on cells. In this case, the unique surface protein (or answer) is an entire bucket of radiator fluid from a storey above.

We could make these challenges for AI even tougher too, by making them diverse and ever-changing, simply by ensuring that different communities’ quirks are different, and that each keeps changing. A little counter-intuitively perhaps, to a world that’s spent the last few centuries pushing for standardisation, this could mean a new role for human ingenuity (and potentially new jobs) in figuring out where to strategically push against standardisation, by figuring out where it might add minimal friction for humans and maximal friction for AI.

Models may evolve beyond this, of course, but for the time being, since LLMs are mainly trained on situations in which answers are provided, models learn to produce answer-like responses rather than withhold them. They see “I don’t know” in the data, but not necessarily in a way that teaches them when to use it based on actual uncertainty.

A secure system can browse the larger internet by remotely controlling an external computer that’s connected to the global internet, via keyboard inputs sent via data diodes. It can then receive a basic video stream of what the external computer sees in return. This kind of system is offered by companies like Everfox.

Modern data diodes can use a combination of lasers, ASICs, FPGA chips or other hardware. All have their weaknesses, but nonetheless, unlike a normal computer, none can be re-programmed to do other tasks while they’re running.

And, on top of deleting social media apps, perhaps by placing a much more wholesome app, like Wikipedia, in the old location of the social media app you were most addicted to… so that you can watch your own addiction pathways pranging next time you find yourself unconsciously opening Wikipedia.

I often think about the Pet Shop Boys lyric that’s roughly: “we were never bored, because we were never boring”. This was an excellent provocation in a (now bygone) era when all of us were often bored. It was a call to make something of the lack of anything to do – to use that space to be creative. In essence, the lyric told us that boredom is a bad thing, and good people will work to quickly move past it. (Strictly speaking, the dictionary definition of “boredom” aligns with this – it’s “the feeling of weariness and impatience due to a lack of occupation and/or interest”.) In this piece, by saying we all need to get bored more often, I admit that I’m being slightly playful with the meaning of the word, because I’m essentially saying that boredom is a good thing – a thing to actively aim towards. I’m doing so because I think this makes sense for our times. My view is that, now that we live in a world of constant stimulation, and of ever-availability of immediate distraction, we all now need a better signpost than “we were never bored because we were never boring”. We need more than a call to be creative when we’re bored. We now need a signpost towards the feeling of boredom itself, so we might get there at all, so that we can then use that bored space to creatively ponder… and wander over yonder. That’s why I strongly feel that it’s now a good provocation to say to ourselves “get bored often, but then don’t be boring”.

The common sense thinking here is confirmed by complexity science: rapid changes to a system (be it a company, a nation, or life in a pond) tend to be destructive – when the changes outrun the system’s capacity to adapt.

There’s an argument for preserving human knowledge, yes. But it’s also arguable that the knowledge that might be of use to AI agents is increasingly being absorbed into the models.

It’s very possible for an unaligned AI agent to act neutrally in a given situation. For example, it might face a choice in which it benefits equally from humanity becoming stronger or weaker. Crucially, a “neutral” label only makes sense in these specific situations. If an AI agent that previously seemed neutral might later benefit from us humans suffering, and it’s not specifically aligned with us, surely it would take that chance.

This balance of competition, collaboration and reliance is nothing new of course – it’s how interstate diplomacy has (naturally) been done since the dawn of civilisation.

Here, “power” means the ability (whether executed or not) to take over the agency (ability to control the real world) of other agents – whilst considering the runaway effects of power accumulation as new agency is acquired.

One might ask how starfish agents could limit other agents. My personal view is that this doesn’t necessarily need to be predetermined. The starfish agents would need to be able to work out how to influence the system themselves to achieve their aims. For example, they might help to coordinate the agents that happen to be the 2nd, 3rd and 4th most powerful agents at a given moment in time, to ensure they’re working together to prevent the most powerful agent from becoming too powerful.

Considering this makes me think of the story of Henrietta Lacks’s famous cells (“HeLa cells”) – the first known human cells to be easily growable and propagatable outside of a human body (a gift vital for testing drugs and cancer treatments that she gave to science at the price of her own life). After their discovery, people tried to find alternative strains, so testing could be done on a wider diversity of cells. During the 1960s and 1970s, there were therefore multiple different ‘cell lines’ available as “alternatives” to HeLa cells. However, it was ultimately discovered that many of the other cell lines were actually dominated by HeLa cells, thanks to scientists mistakenly, unknowingly contaminating them with HeLa cells.

One of the most interesting explorations of AI futures I’ve read. My personal take is your focus is on the potential for AI products to become autonomous actors, whereas the more pressing existential risk is the one here currently: the deliberate choice for people to offload their thinking onto AI systems that are optimised for it, creating a homogeneity of thought and expression which dramatically limits our potential for the creative thinking and innovation we need to face real challenges. And this ‘collective brain’ can, and will, be easily manipulated by powerful humans such as in tech companies and governments.

I’ve had to pause (for a bike ride) at around 70%, but had to drop a thank you first.

I’ve been thinking of swapping the iPhone for a ‘brick phone’ except for a controlled window each workday. The logistics of how I’d stay visible for work etc slowed the thought process down (admittedly often stopped it altogether), but I found a lot of oomph in this piece to push through and find a system to hugely limit the time I give my mind to addictive social media.

Also, as terrifying as it is having so many unarticulated fears about AI confirmed, I find strange comfort in the likening of these systems to biological systems. As a cynic who generally doubts the possibility of any control on any AI whatsoever, the idea that they may eventually self control is some relief.

I look forward to reading the conclusion!